An MQTT sensor for reptile temperature and humidity

Maintaining the best climatic conditions for a reptile takes a lot of experience, and if you are, like me, a newbie, it’s better to help yourself with the proper technology.

For this reason I’ve designed a very simple MQTT-enabled sensor that can be quickly integrated with your home automation system or simply used as a standalone temperature and humidity monitor.

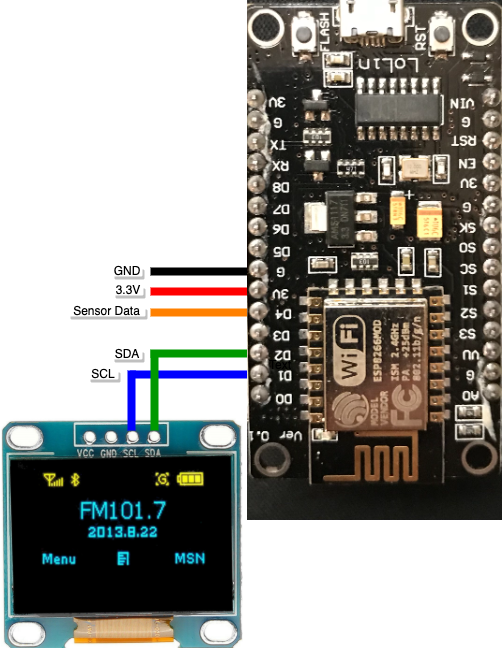

It’s based on a very simple circuit in which a DHT22 sensor is connected to an ESP32 controller. The data collected by the sensor is sent both to a (configurable) MQTT server and to an OLED display.
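To give an idea of the data path, here is a minimal sketch of how a reading could be checked and turned into an MQTT payload. It is not the project's actual firmware: the `Reading` struct, the function names and the JSON field names are my own assumptions for illustration. The limits are the DHT22 datasheet range (-40 to 80 °C, 0 to 100 % relative humidity), and the NaN check reflects how common Arduino DHT libraries report a failed read.

```cpp
#include <cmath>
#include <cstdio>
#include <string>

// Hypothetical container for one sensor sample (names are illustrative).
struct Reading {
    float temperatureC;  // degrees Celsius
    float humidityPct;   // relative humidity, percent
};

// Reject failed reads (NaN) and values outside the DHT22 datasheet range.
bool isPlausible(const Reading& r) {
    if (std::isnan(r.temperatureC) || std::isnan(r.humidityPct)) {
        return false;
    }
    return r.temperatureC >= -40.0f && r.temperatureC <= 80.0f &&
           r.humidityPct >= 0.0f && r.humidityPct <= 100.0f;
}

// Build a small JSON payload; the field names are an assumption,
// pick whatever your MQTT consumers expect.
std::string toJsonPayload(const Reading& r) {
    char buf[64];
    std::snprintf(buf, sizeof(buf),
                  "{\"temperature\":%.1f,\"humidity\":%.1f}",
                  r.temperatureC, r.humidityPct);
    return std::string(buf);
}
```

On the ESP32, the resulting string would be handed to whatever MQTT client library the firmware uses, and the same two values drawn on the display. Readings that fail `isPlausible` are best skipped rather than published.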

Connection is very simple:

The ESP32 can easily be flashed using Arduino.

The full source code for this project is available here

Domotic Energy Meter

Integrating power metering into a home automation system is very useful, not only for statistical and economic reasons but also to promptly recognize and manage overloads and prevent the smart meter from cutting the power automatically.

There are a lot of projects on the net using Arduino or Raspberry Pi.

What I’m looking for is:

- A power sensor that can easily be extended with new functions

- Fully programmable over the LAN, without physical access to a USB port or SD card

- Able to be as autonomous as possible. If the home server is temporarily down, I still need to read the current power consumption, or maybe drive locally controlled power relays
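The last requirement, reacting to an overload locally even when the home server is down, can be sketched as a small piece of logic that runs on the meter itself. This is only an illustration under my own assumptions, not the code from the project: the `OverloadGuard` class, the power limit and the grace period are all hypothetical. The guard asks to shed the load only when consumption stays above the limit for the whole grace period, so short peaks do not switch the relays.

```cpp
#include <cstdint>

// Hypothetical local overload guard (names and values are illustrative).
class OverloadGuard {
public:
    OverloadGuard(float limitWatts, uint32_t graceMs)
        : limitWatts_(limitWatts), graceMs_(graceMs) {}

    // Feed one power sample with its timestamp in milliseconds.
    // Returns true when the load should be shed (e.g. open a relay).
    bool update(float watts, uint32_t nowMs) {
        if (watts <= limitWatts_) {
            over_ = false;  // back under the limit: reset the timer
            return false;
        }
        if (!over_) {
            over_ = true;   // first sample above the limit
            overSince_ = nowMs;
        }
        // Unsigned subtraction stays correct across timer wrap-around.
        return (nowMs - overSince_) >= graceMs_;
    }

private:
    float limitWatts_;
    uint32_t graceMs_;
    bool over_ = false;
    uint32_t overSince_ = 0;
};
```

With, say, `OverloadGuard guard(3000.0f, 5000);` (a made-up 3000 W limit and 5 second grace period), the main loop would call `guard.update(currentWatts, millis())` after each measurement and switch off a non-essential relay when it returns true.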

Arduino-based projects are very cheap and reliable, but if you need to modify your firmware it’s difficult to do so without physical access to the board to reload it.

Raspberry Pi is very versatile and fully programmable over the LAN, but in my opinion the number of logic ports is very limited (this is just my opinion; Raspberry Pi enthusiasts, please don’t feel offended 🙂 )

So why not use BOTH of them?

This project takes the best from Arduino and Raspberry Pi, combining the expandability and easy-to-manage logic ports of an Arduino with the versatility of a Raspberry Pi in terms of integration and remote programming.

See the full instructions at https://www.instructables.com/Domotic-Energy-Meter/